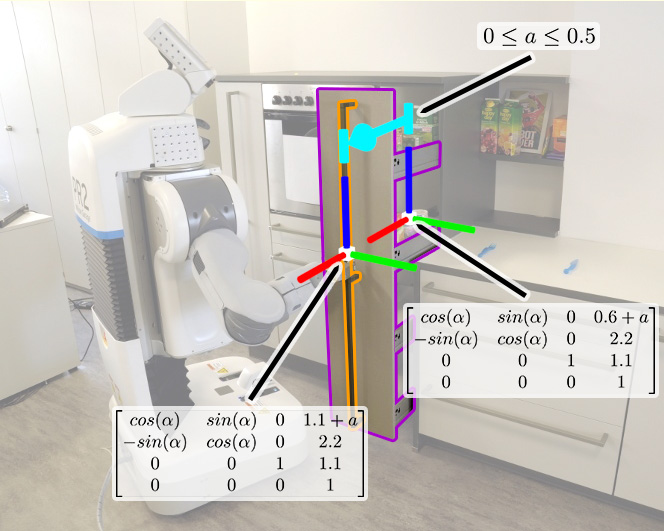

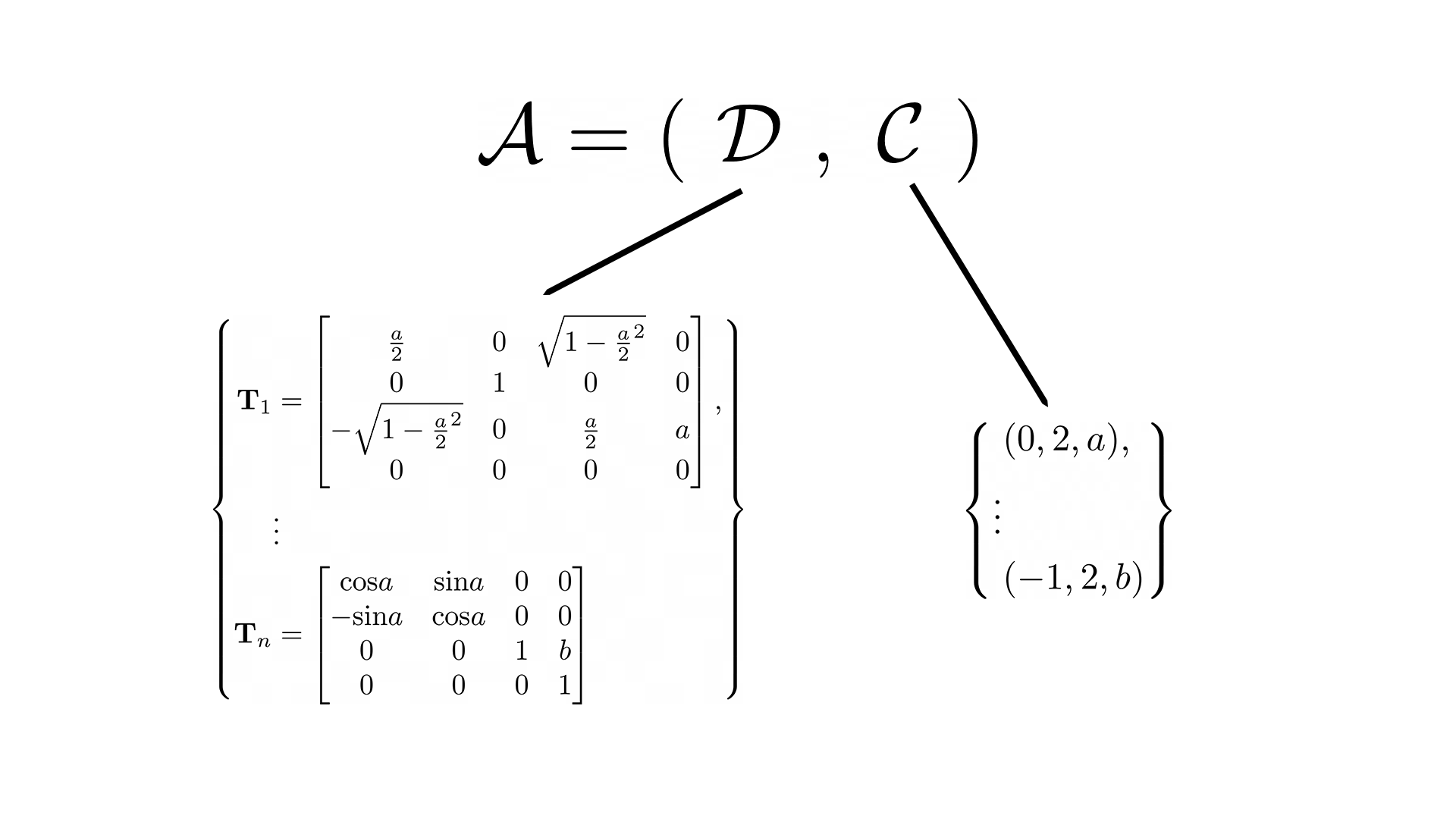

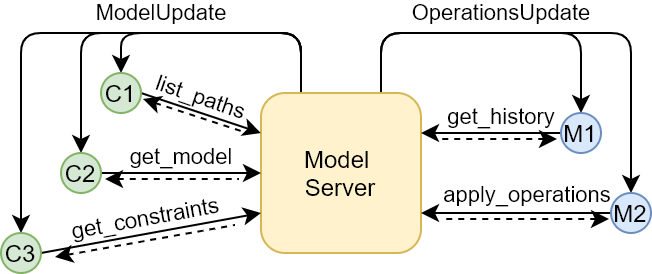

Future service robots will need to execute abstract instructions such as "fetch the milk from the fridge". To translate such instructions into actionable plans, robots require in-depth background knowledge. With regard to interactions with doors and drawers, robots require articulation models that they can use for state estimation and motion planning. Existing articulation model frameworks take an abstracted approach to model building, which requires additional background knowledge to construct mathematical models for computation. In this paper, we introduce a novel framework that uses symbolic mathematical expressions to model articulated objects. We provide a theoretical description of this framework and of the operations its models support, and introduce an architecture for exchanging our models in robotic applications, making them as flexible as any other environmental observation. To demonstrate the utility of our approach, we employ our practical implementation, Kineverse, to solve common robotics tasks in state estimation and mobile manipulation, and further use it in real-world mobile robot manipulation.

Kineverse: A Symbolic Articulation Model Framework for Model-Agnostic Mobile Manipulation

Adrian Röfer, Georg Bartels, Wolfram Burgard, Abhinav Valada, Michael Beetz